Why Needs Assessments Elevate Learning Programs

Requirements for the Learner’s Journey

Estimated reading time: 4–5 minutes

Recent chats and discussions on Substack made it clear that some learning institutions might benefit from a needs assessment of the learning requirements relevant to their institutional learning goals.

Traditional needs assessments often begin with “What content is missing?” A capabilities-based needs assessment (CBNA) instead asks: “What do learners need to be able to do in varied, evolving contexts, and where are the functional gaps?” It also asks: “Which standardized compliance items does the learner’s journey require?” This reframing prioritizes transfer, adaptability, and long-term value across K–12, independent schools, and adult learning/workforce programs.

Capability vs. Competency

Competency: Demonstrable performance of a defined task under expected conditions.

Capability: The ability to flexibly integrate knowledge, skills, dispositions, tools, and judgment to address novel problems (see the OECD Learning Compass 2030).

Emphasizing capability helps align curriculum, instruction, and assessment toward adaptable performance rather than narrow task rehearsal.

Core Components of a Capabilities-Based Needs Assessment

Strategic Anchor: Link compliance requirements and other learning intentions to system, mission, or workforce goals.

Capability Map: Define a concise set (8–15) of high-impact, transferable capabilities (e.g., use of AI and LLMs, data reasoning, ethical decision-making, systems thinking).

Evidence Scan: Triangulate curriculum maps, classroom/learning observations, learner work samples, assessment data, stakeholder interviews, and (for adults) performance metrics.

Gap & Barrier Typology:

Gap Analysis (the capabilities learners currently demonstrate compared with your required capabilities)

Performance Barrier (tools, time, scheduling, assessment misalignment)

Root Cause Analysis: Use structured questioning (e.g., 5 Whys) before prescribing courses or technology.

Priority Matrix: Score each gap on Impact, Feasibility, Urgency, and Equity.

Solution Architecture: Combine instructional redesign (rich tasks, rubrics), environmental support (job aids, scheduling shifts), coaching, and micro-credentials.

Measurement Loop: Leading indicators (formative rubric progress, engagement) and lagging indicators (achievement growth, completion, ROI, retention).
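To make the Gap Analysis and Priority Matrix steps more concrete, here is a minimal Python sketch. It assumes each capability is rated on a hypothetical 1–4 rubric scale for both the required level and the level currently observed in the evidence scan, and that each gap is rated 1–5 on Impact, Feasibility, Urgency, and Equity with equal weights. The scales, the weights, the function names, and the sample capabilities are all illustrative assumptions, not part of any published CBNA method.

```python
from dataclasses import dataclass

# ASSUMPTION: a hypothetical 1-4 rubric scale (1 = beginning ... 4 = advanced).
@dataclass
class Capability:
    name: str
    required: int  # level the strategic anchor calls for
    observed: int  # level evidenced in the evidence scan

# ASSUMPTION: equal, hypothetical weights for the four Priority Matrix criteria.
WEIGHTS = {"impact": 1.0, "feasibility": 1.0, "urgency": 1.0, "equity": 1.0}

def gap(cap: Capability) -> int:
    """Gap = required level minus observed level, floored at zero."""
    return max(cap.required - cap.observed, 0)

def priority(scores: dict[str, int]) -> float:
    """Weighted sum of 1-5 ratings on Impact, Feasibility, Urgency, Equity."""
    return sum(WEIGHTS[k] * scores[k] for k in WEIGHTS)

def rank_gaps(
    caps: list[Capability], ratings: dict[str, dict[str, int]]
) -> list[tuple[str, int, float]]:
    """Return (name, gap, priority) for capabilities with a gap, highest priority first."""
    rows = [(c.name, gap(c), priority(ratings[c.name])) for c in caps if gap(c) > 0]
    return sorted(rows, key=lambda row: row[2], reverse=True)

if __name__ == "__main__":
    # Hypothetical sample inputs, for illustration only.
    caps = [
        Capability("data reasoning", required=3, observed=2),
        Capability("systems thinking", required=3, observed=1),
        Capability("ethical decision-making", required=3, observed=3),
    ]
    ratings = {
        "data reasoning": {"impact": 4, "feasibility": 4, "urgency": 3, "equity": 3},
        "systems thinking": {"impact": 5, "feasibility": 3, "urgency": 4, "equity": 4},
        "ethical decision-making": {"impact": 4, "feasibility": 4, "urgency": 2, "equity": 3},
    }
    for name, g, p in rank_gaps(caps, ratings):
        print(f"{name}: gap={g}, priority={p}")
```

With these made-up inputs, systems thinking (gap 2, priority 16.0) ranks above data reasoning (gap 1, priority 14.0), and ethical decision-making drops out because it shows no gap. A real team would likely adjust the weights (for example, weighting Equity more heavily) to reflect its strategic anchor.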

How This Strengthens Different Learning Contexts

Public Schools

Equity Targeting: Capability rubrics surface differential access to cognitively demanding tasks, informing resource allocation (aligned with meta-analytic findings on feedback and clarity).

Beyond Test Narrowness: Incorporates transferable reasoning, collaboration, and digital and data literacy.

More Focused Budget Use: Avoids scattershot initiatives; concentrates professional development on a shared capability taxonomy.

Community Alignment: Connects emerging local needs (e.g., clean energy analytics or GIS literacy) with interdisciplinary projects.

Private/Independent Schools

Strategic Differentiation: A published capability map becomes part of the school’s value proposition.

Evidence for Stakeholders: Portfolios show growth in innovation, ethical reasoning, and global systems thinking.

Interdisciplinary Studios: Faculty co-design tasks that braid multiple capabilities (e.g., sustainability + computational modeling).

Admissions & Pathways: Capability evidence strengthens placement and scholarship narratives.

Adult/Workforce & Continuing Education

Rapid Reskilling: Aligns learning to evolving occupational or cybersecurity frameworks (e.g., NICE).

Micro-Credential Integrity: Badges tied to observable capability thresholds, not seat time.

Performance Focus: Reduces over-reliance on courses; integrates workflow support and coaching to improve transfer.

Digital Transformation Readiness: Prioritizes data literacy, AI collaboration practices, compliance judgment, and cross-functional teaming.

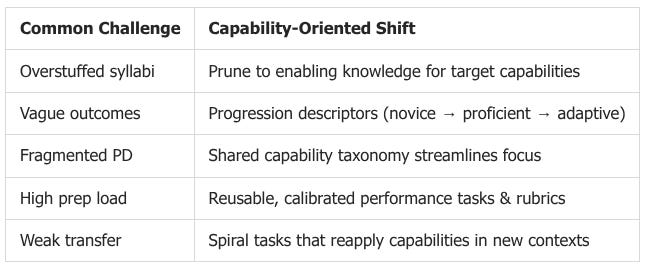

Supporting Teacher & Instructor Efficiency

Teacher clarity, formative assessment cycles, and feedback become more coherent when anchored to explicit capability progressions (supported by research on feedback effectiveness and formative assessment). This reduces grading volume (fewer, richer tasks) and increases time for actionable feedback.

Additional Benefits

Filters Initiatives: Asking “Does this accelerate a mapped capability?” curbs initiative fatigue.

Better Data: Dashboards track capability progression, not just item correctness.

Cross-Disciplinary Coherence: Shared language (e.g., modeling, argumentation, evidence evaluation) unites departments.

AI Integration: The assessment clarifies where AI tools can scaffold learning (drafting, data organization) and where human mentorship remains central (ethical deliberation, complex judgment).
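The “Better Data” point above can be illustrated with a small Python sketch that summarizes rubric levels per capability across assessment cycles, instead of reducing everything to a single percent-correct score. It assumes hypothetical records of (learner, capability, cycle, rubric level) on a 1–4 scale; the record format, the scale, and the function names are illustrative assumptions, not the schema of any particular dashboard product.

```python
from collections import defaultdict

# ASSUMPTION: each record is (learner, capability, cycle, rubric_level),
# with rubric_level on a hypothetical 1-4 scale.
Record = tuple[str, str, int, int]

def progression(records: list[Record]) -> dict[str, dict[int, float]]:
    """Mean rubric level per capability for each assessment cycle."""
    buckets: dict[str, dict[int, list[int]]] = defaultdict(lambda: defaultdict(list))
    for _learner, capability, cycle, level in records:
        buckets[capability][cycle].append(level)
    return {
        cap: {cycle: sum(levels) / len(levels) for cycle, levels in sorted(cycles.items())}
        for cap, cycles in buckets.items()
    }

def growth(trend: dict[int, float]) -> float:
    """Change in mean level from the earliest to the latest recorded cycle."""
    cycles = sorted(trend)
    return trend[cycles[-1]] - trend[cycles[0]]

if __name__ == "__main__":
    # Hypothetical sample data: two learners, two capabilities, two cycles.
    records = [
        ("A", "argumentation", 1, 2), ("B", "argumentation", 1, 1),
        ("A", "argumentation", 2, 3), ("B", "argumentation", 2, 2),
        ("A", "modeling", 1, 2), ("B", "modeling", 1, 2),
        ("A", "modeling", 2, 2), ("B", "modeling", 2, 3),
    ]
    for cap, trend in progression(records).items():
        print(cap, trend, "growth:", growth(trend))
```

Keeping the capability, not the test item, as the grouping key is the design choice that lets a dashboard show progression on shared capabilities such as argumentation or modeling across departments.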

Mini Example

Context: Middle school STEM achievement plateaued despite full curriculum coverage.

Findings: Students follow procedural lab steps but cannot design or justify investigative approaches (systems inquiry gap).

Root Causes: Over-scripted labs; rubrics reward completion over reasoning; instructor discomfort with open-ended tasks.

Interventions:

Introduce a “Design Justification Brief” structure.

Use questioning strategies to elicit divergent hypotheses.

Replace four low-impact quizzes with two iterative design cycles.

Metrics: Rubric scores on problem framing and variable control; reflective journals coded for systems thinking indicators.

Result (pilot term): Increased rubric proficiency; reduced prep time via reusable scaffolds.

Quick Starter Checklist

Draft a one-page strategic anchor.

Build a first-pass capability map (10 ±2 entries).

List standardized compliance requirements.

Collect multi-source evidence (curriculum, work samples, observations, analytics).

Classify gaps (capability vs. opportunity vs. performance barriers).

Prioritize with a scoring grid (Impact, Equity, Feasibility, Strategic Fit).

Prototype one integrated performance task + rubric.

Run a short feedback/moderation cycle (instructors + learner self-assessment).

Refine before widening the scope.

Takeaway

Sometimes, we need to get back to the basics. What do learners really need to learn in our unique environments? What is the best way for them to learn? Why is this in the best interest of the learner? How does this support the teacher or instructor? What are your thoughts?

References

Aurora Institute. (2019). Definition of competency-based education. https://aurora-institute.org/competencyworks/definition-of-competency-based-education/

Baldwin, T. T., & Ford, J. K. (1988). Transfer of training: A review and directions for future research. Personnel Psychology. https://doi.org/10.1111/j.1744-6570.1988.tb00632.x

Hattie, J. (2009). Visible Learning. Routledge. https://www.routledge.com/Visible-Learning/Hattie/p/book/9780415476188

ISTE. (2021). ISTE Standards for Students. https://www.iste.org/standards/iste-standards-for-students

Kaufman, R. A. (2006). Change, choices, and principles: Achieving better performance. Performance Improvement. https://onlinelibrary.wiley.com/doi/10.1002/pfi.2006.4930450708

Le, C., Wolfe, R., & Steinberg, A. (2014). The Past and the Promise: Today’s Competency Education Movement. Jobs for the Future. https://www.jff.org/resources/past-and-promise-todays-competency-education-movement/

NIST. (2020). Workforce Framework for Cybersecurity (NICE Framework) (NIST SP 800-181 Rev. 1). https://csrc.nist.gov/publications/detail/sp/800-181/rev-1/final

OECD. (2019). OECD Learning Compass 2030. https://www.oecd.org/education/2030-project/learning-compass-2030/

Phillips, J. J., & Phillips, P. P. (2016). Handbook of Training Evaluation and Measurement Methods (4th ed.). Routledge. https://www.routledge.com/Handbook-of-Training-Evaluation-and-Measurement-Methods/Phillips-Phillips/p/book/9781138194592

Prahalad, C. K., & Hamel, G. (1990). The core competence of the corporation. Harvard Business Review. https://hbr.org/1990/05/the-core-competence-of-the-corporation

Wiliam, D. (2011). Embedded Formative Assessment. Solution Tree. https://www.solutiontree.com/embedded-formative-assessment.html